The advance of human intelligence and the vulnerability of its use in shaping — or distorting — civilization

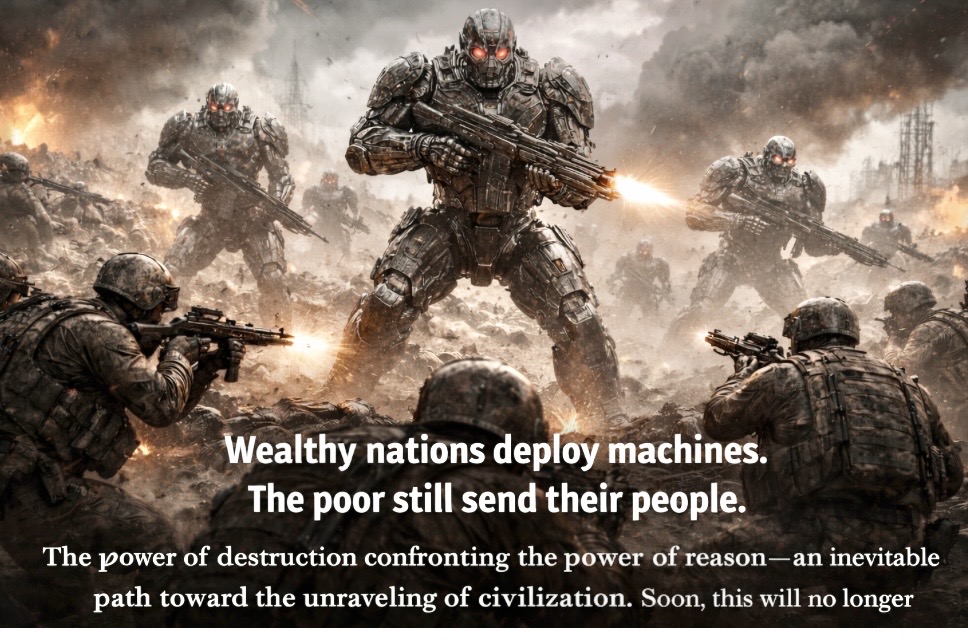

Wealthy nations deploy machines.

The poor still send their people.

In recent years, and even more explicitly in the latest proposals of the current administration, the United States has been moving in a concrete and structured way toward incorporating artificial intelligence into its military apparatus. The proposed federal budget for fiscal year 2027 includes a historic increase in defense spending, reaching approximately $1.5 trillion, with direct investments in advanced technological capabilities, including AI-based systems, automation, and next-generation strategic defense.

This direction is not merely aspirational: it continues policies already institutionalized through legislation such as the National Defense Authorization Act, and through the accelerated expansion of contracts with technology companies to develop artificial intelligence solutions for national security. At the same time, the Department of Defense has explicitly prioritized AI as a central axis of modernization, with significant budget increases in areas directly related to automation, data analysis, and autonomous systems.

In this context, the militarization of artificial intelligence definitively leaves the realm of speculative fiction and establishes itself as an objective reality, supported by political decisions, resource allocation, and technological advancements already in the implementation phase.

The rapid advancement of artificial intelligence and autonomous systems has introduced a new dimension to the nature of conflict — one that transcends traditional boundaries of strength, strategy, and human endurance.

What once belonged to the realm of fiction is now becoming reality.

The battlefield is no longer defined solely by the courage, resilience, or sacrifice of those who stand on it. Increasingly, it is shaped by technological asymmetry — where a few, equipped with advanced systems, can exert overwhelming force over many.

This shift raises not only strategic concerns, but profound ethical questions.

As machines begin to assume roles historically reserved for human judgment, we are confronted with a critical dilemma:

how far can we delegate decisions that carry irreversible consequences?

The promise of artificial intelligence as a tool for progress stands in stark contrast to its potential use as an instrument of power.

It is not the technology itself that defines its impact, but the intentions and structures that guide its application.

Throughout history, human innovation has carried this duality — capable of building and destroying, of elevating and destabilizing. Artificial intelligence, however, amplifies this tension to an unprecedented scale.

When the power of destruction is enhanced by systems that operate with speed, precision, and growing autonomy, the balance between reason and force becomes increasingly fragile.

And within this imbalance lies a deeper risk:

the gradual erosion of moral accountability.

As decisions become mediated — or even executed — by machines, responsibility risks becoming diffused, obscured, or displaced. In such a scenario, the question is no longer only what can be done, but who truly answers for what is done.

The power of destruction confronting the power of reason is no longer a distant abstraction.

It is an unfolding reality.

A process of civilizational deconstruction may emerge not from technological failure, but from the misalignment between human capability and human wisdom.

Artificial intelligence will not determine the future of humanity.

It will reflect it.

And perhaps the most pressing question of our time is not whether machines will surpass us in capability —

but whether we will rise to the level of responsibility required to guide them.

Soon, this will no longer belong to fiction.

Epilogue — A Reality Already in Motion

This is no longer a theoretical trajectory.

The militarization of artificial intelligence is already grounded in existing conceptual frameworks and supported by technologies that are not only viable, but actively evolving. What we are witnessing is not a distant possibility, but a process well into maturation, advancing toward broader deployment on a timeline far shorter than we may be willing to admit.

Autonomous systems, real-time data integration, algorithm-assisted decision-making, and unmanned platforms are no longer experimental constructs.

They are present, operational, and increasingly embedded within modern strategic infrastructures.

The question that remains open is no longer technical feasibility —

but ethical restraint.

The use of artificial intelligence under the logic of hegemonic interests — political, strategic, or military — places unprecedented pressure on humanity’s ability to maintain a rational and responsible application of its own creations.

When the pursuit of dominance overrides ethical consideration, technology ceases to be an instrument of progress and becomes a mechanism of imbalance — amplifying asymmetries and deepening vulnerabilities.

At this stage, the risk is no longer hypothetical.

It is structural.

And it challenges, at its core, the very notion of civilization as a project guided by reason.

If technological evolution continues to outpace ethical maturity, the consequences may not be immediate collapse — but something more subtle and perhaps more dangerous:

the gradual normalization of decisions made without conscience, executed without accountability, and justified without reflection.

______

Daniel Whitaker — Defense Technology Analyst

The article demonstrates a clear sense of strategic realism without falling into the trap of empty alarmism. On the contrary, it shows that the technological foundation already exists, that the integration between artificial intelligence and military systems is actively underway, and that the progression of this paradigm is no longer a question of “if,” but rather of “when” and “how.” This distinction is critical, as the subject is not treated as speculative fiction, but as a process already in maturation, which lends the argument substantial credibility.

What stands out most, however, is the underlying moral conflict at the core of the text.

It presents, in a compelling and reasoned manner, the contrast between rapid technological advancement and a comparatively stagnant ethical framework. The capacity for destruction continues to grow at an exponential rate, while the development of conscious, responsible decision-making does not keep pace. This imbalance reveals an inherent and perhaps unavoidable tension: technology advancing faster than human awareness and moral restraint.

This is the kind of analytical reflection that elevates a reasonable concern into the realm of a broader intellectual statement.